Hierarchical Planning for Rope Manipulation using Knot Theory and a Learned Inverse Model

CoRL 2023

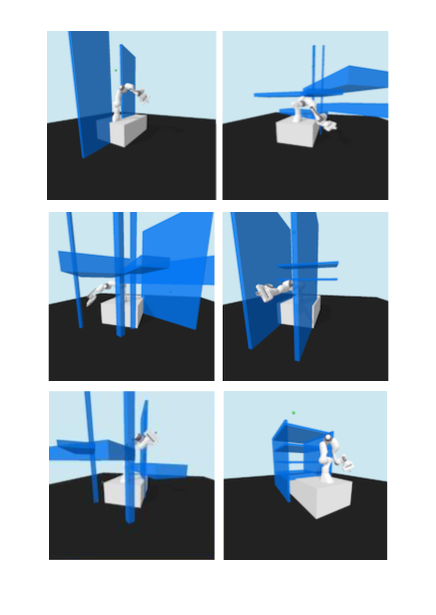

This work considers planning the manipulation of deformable one-dimensional objects, such as ropes or cables, specifically to tie knots. We propose TWISTED: Tying With Inverse model and Search in Topological space Excluding Demos, a hierarchical planning approach which, at the high level, uses ideas from knot theory to plan a sequence of rope configurations, while at the low level uses a neural-network inverse model to move between the configurations in the high-level plan. To train the neural network, we propose a self-supervised approach, where we learn from random movements of the rope. To focus the random movements on interesting configurations, such as knots, we propose a non-uniform sampling method tailored for this domain. In simulation, we show that our approach can plan significantly faster and more accurately than baselines. We also show that our plans are robust to parameter changes in the physical simulation, suggesting future applications via sim2real.
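
The two-level structure described above can be illustrated with a minimal toy sketch. It is not the TWISTED implementation: the abstract state here is just an integer crossing count, a deliberate simplification standing in for a real topological representation, and `toy_neighbors`, `toy_inverse_model`, and `toy_step` are hypothetical placeholders for the topological moves, the learned neural-network inverse model, and the physics simulator. The high level is a plain breadth-first search, chosen only for brevity.

```python
from collections import deque

def plan_high_level(start, goal, neighbors):
    """Breadth-first search over abstract states; returns a state path or None."""
    parent = {start: None}
    queue = deque([start])
    while queue:
        state = queue.popleft()
        if state == goal:
            path = []
            while state is not None:
                path.append(state)
                state = parent[state]
            return path[::-1]
        for nxt in neighbors(state):
            if nxt not in parent:
                parent[nxt] = state
                queue.append(nxt)
    return None

def execute_plan(path, inverse_model, step):
    """Follow the high-level path, asking the inverse model for each action."""
    state = path[0]
    actions = []
    for target in path[1:]:
        action = inverse_model(state, target)  # low level: which action reaches target?
        state = step(state, action)            # apply the action in the "simulator"
        if state != target:                    # the low-level move missed its target
            return actions, False
        actions.append(action)
    return actions, True

# Hypothetical toy domain: the state is a crossing count between 0 and 3.
def toy_neighbors(state):
    return [s for s in (state - 1, state + 1) if 0 <= s <= 3]

def toy_inverse_model(state, target):
    # Stand-in for a learned model: the action is the change in crossing count.
    return target - state

def toy_step(state, action):
    # Stand-in for a physics simulator.
    return state + action

path = plan_high_level(0, 3, toy_neighbors)
actions, success = execute_plan(path, toy_inverse_model, toy_step)
```

In this toy setting the low level always reaches its target. With a learned inverse model, a failed step would more plausibly trigger a retry or a replan from the state actually reached.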